This month, Andrea Bonime-Blanc brought the Anthropic-OpenAI-Pentagon dispute to the Ripped From the Headlines Salon, unpacking its implications for AI governance, national security, and corporate responsibility. Members debated what this unprecedented standoff means for the future of AI regulation.

Let us know what you think on the LinkedIn version of this newsletter!

Our latest monthly Athena Alliance Ripped From the Headlines Salon focused on the implications of the standoff among Anthropic, OpenAI, and the U.S. Department of Defense (also called the Department of War, or DOD), which members voted the top topic for April 2026.

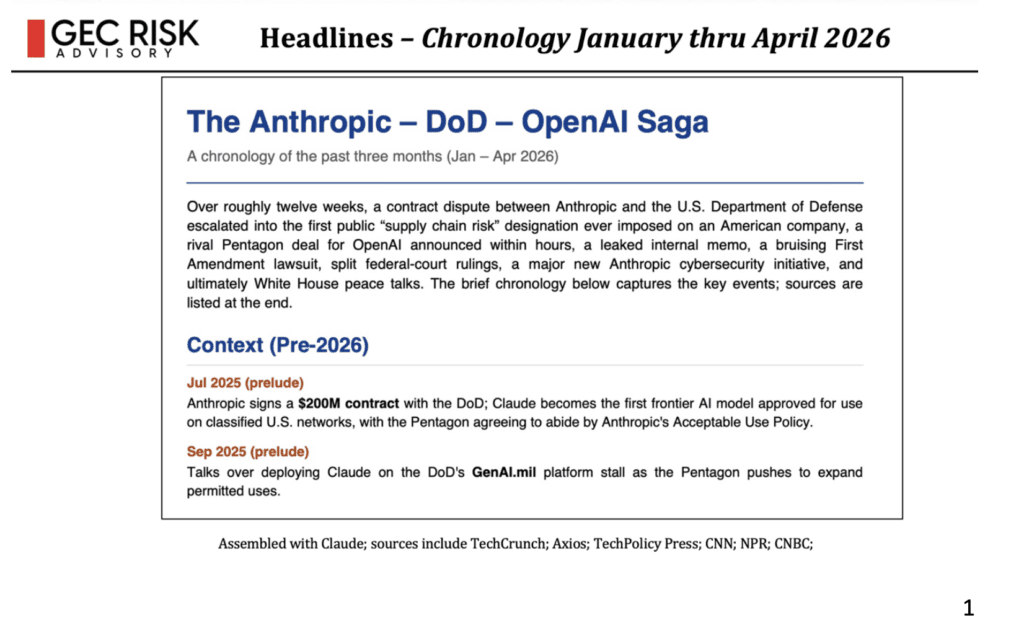

I presented a chronology of the recent confrontation between Anthropic and OpenAI over defense contracts with the U.S. Department of Defense, drawing on reliable news sources including the New York Times, the Wall Street Journal, the Economist, the Financial Times, Axios, TechCrunch, CNN, CNBC, and NPR. The conflict began with the DOD designating Anthropic as a supply chain risk, continued with OpenAI’s subsequent deal with the Pentagon, and culminated in Anthropic’s unveiling of Project Glasswing, a cybersecurity initiative involving thousands of zero-day vulnerabilities.

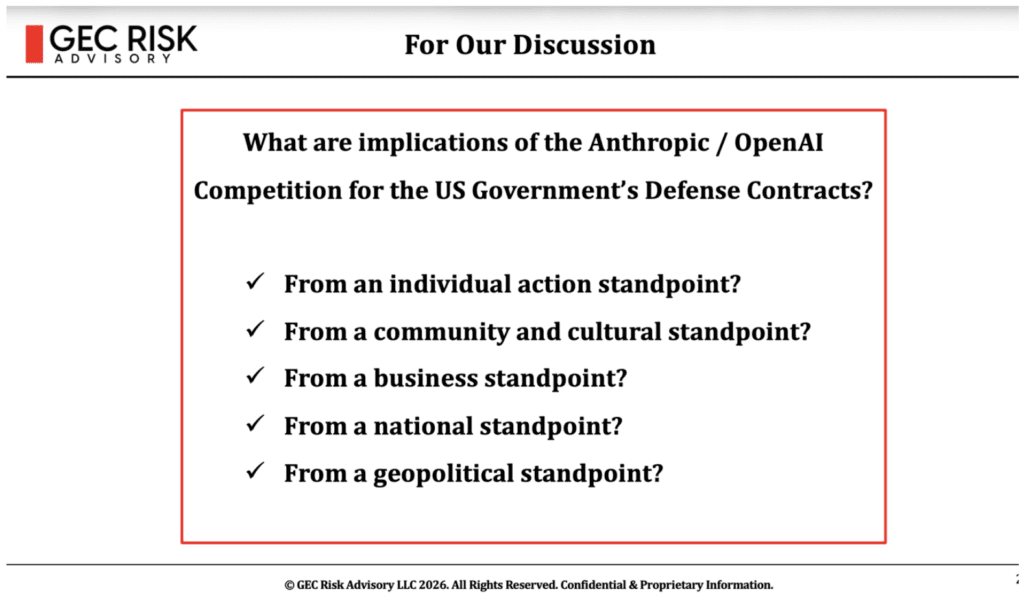

The key question we addressed is the one encapsulated in the graphic below.

The discussion covered military and national security implications, the need for commercial regulation, personal privacy concerns, and the role of capitalism in the AI industry.

Participants debated whether regulation is necessary, whether current regulators are capable of overseeing rapidly evolving technology, and the influence of key figures in the AI space, expressing particular concern about the five individuals who control the major AI companies.

The conversation also touched on the privacy paradox, the lack of comprehensive privacy rights in the United States compared to the EU, and the potential for international cooperation on AI regulation.

The conversation highlighted the cultural and philosophical differences between the two AI companies: Dario Amodei of Anthropic has emphasized safety and regulatory guardrails, while Sam Altman of OpenAI has pursued a more opportunistic approach. We also considered the broader implications of these developments for national security, international cooperation, and individual privacy, and briefly discussed the need for policy frameworks to govern AI development and the potential risks of autonomous weapons and surveillance technologies.

The group discussed the need for regulation and guardrails around AI development, particularly focusing on military, commercial, and personal use applications. One participant emphasized the importance of international treaties similar to non-proliferation agreements to govern cyber warfare and AI tools, while also highlighting the need for regulation of commercial AI use and personal data privacy.

Another participant noted that some states, such as New York and California, are attempting to implement their own AI regulations, and described how political candidates are being targeted by dark money groups opposing AI guardrails, a sign of the growing influence of AI-related interests in electoral politics.

The group discussed the balance between capitalism and regulation, particularly in the context of AI and vendor contracts. One participant provocatively asked how far a vendor should be able to control the way its product is used, and raised concerns about voter sentiment ahead of the midterms.

The discussion evolved into a debate about the role of regulation in capitalism, with participants agreeing that appropriate regulations are necessary to ensure safety and ethical standards, much as seatbelt requirements do for driving. The conversation also touched on the tension between federal and state-level AI regulation, with concerns raised about the federal government’s current approach to AI safety protections.

The group discussed challenges with AI regulation and privacy rights in the United States. One participant highlighted the need for stronger privacy regulations to control the data that fuels AI systems, while another noted that the US lacks comprehensive federal privacy legislation comparable to the EU’s.

A participant raised concerns about whether regulators can keep pace with rapidly evolving technology, and another suggested drawing lessons from the historical regulation of information and media monopolies.

The discussion also addressed concerns about tech company leadership, with one participant worried about CEO influence and the potential for AI models to replicate corporate decision-making styles.